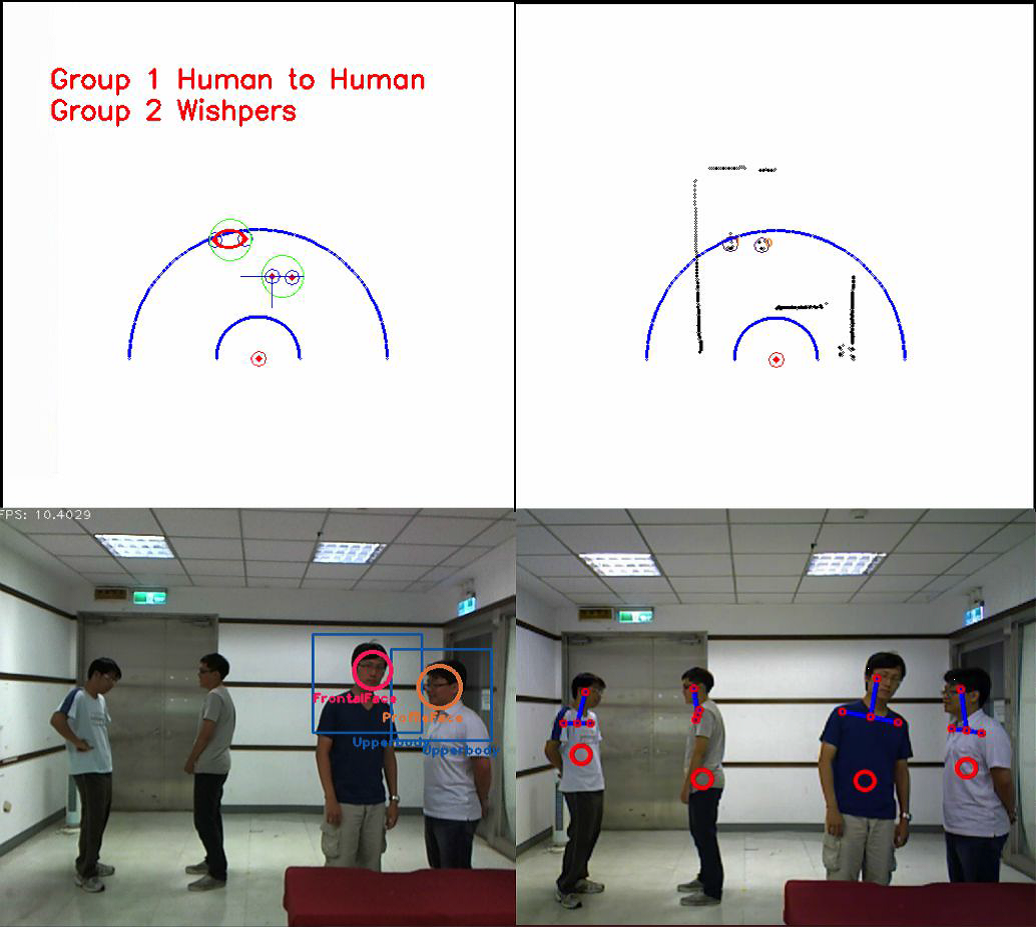

Human-Robot Interaction

[tech report]

Shih-Huan Tseng, Guan-Lin Chao, Chin Lin, Tung-Yen Wu, Li-Chen Fu

Course: Intelligent Robot and Automation Lab (2013 Fall – 2014 Spring)

Description:

We designed an inference model to handle uncertainty in multi-human social interaction patterns and task planning. Human social interaction patterns are inferred through sensor fusion and a sociological understanding of spatial signals. These patterns, together with environmental context, feed a Decision Network that plans interaction tasks, and the robot then carries out a series of actions, modeled by a task planner, to complete those tasks. The fused sensor sources are a laser range finder for detecting human legs, an ASUS Xtion Pro with the OpenNI2 library for human torso detection, and a webcam with the OpenCV library for face detection. We implemented the Decision Network with the GeNIe GUI and the SMILE library.
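
As a rough illustration of how a decision network selects a task, the sketch below picks the task with the highest expected utility under a belief over interaction patterns. It does not use GeNIe or SMILE; the pattern names, task names, probabilities, and utility values are all hypothetical and not taken from the tech report.

```python
# Hypothetical belief over interaction patterns (in the real system this
# would come from the sensor-fusion inference step).
belief = {"approaching": 0.6, "passing_by": 0.3, "group_conversation": 0.1}

# Hypothetical utility table: utility[task][pattern].
utility = {
    "greet": {"approaching": 10.0, "passing_by": -2.0, "group_conversation": -5.0},
    "offer_help": {"approaching": 6.0, "passing_by": -1.0, "group_conversation": -3.0},
    "wait": {"approaching": 0.0, "passing_by": 1.0, "group_conversation": 2.0},
}


def expected_utility(task, belief, utility):
    """Sum of utility weighted by the probability of each pattern."""
    return sum(p * utility[task][pattern] for pattern, p in belief.items())


def best_task(belief, utility):
    """Return the task that maximizes expected utility."""
    return max(utility, key=lambda task: expected_utility(task, belief, utility))


if __name__ == "__main__":
    for task in utility:
        print(task, round(expected_utility(task, belief, utility), 2))
    print("chosen:", best_task(belief, utility))
```

With this made-up belief the robot would choose to greet; shifting the probability mass toward "group_conversation" makes waiting the better choice.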

Simultaneous Localization and Mapping (SLAM)

[tech report] [demo video]

Guan-Lin Chao, Chuan-Heng (Henry) Lin, Sander Valstar, Chieh-Chih (Bob) Wang

Course: Robot Perception and Learning (2013 Fall)

Description:

We constructed a 3D map from RGB-D data using Perspective-n-Point camera pose estimation with SURF feature matching, together with the Iterative Closest Point algorithm. The robot was able to localize itself in an unknown environment and build a satisfactory map of that environment within a reasonable time. The implementation was based on OpenCV and the Point Cloud Library.
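
To give a feel for the Iterative Closest Point step, here is a minimal 2D sketch in plain Python. The project itself worked on 3D point clouds with the Point Cloud Library; this simplified version, including the function names `best_rigid_transform`, `apply_transform`, and `icp`, is an assumption made for illustration only.

```python
import math


def best_rigid_transform(src, dst):
    """Least-squares rotation angle and translation mapping paired 2D points src -> dst."""
    n = len(src)
    cx_s = sum(p[0] for p in src) / n
    cy_s = sum(p[1] for p in src) / n
    cx_d = sum(p[0] for p in dst) / n
    cy_d = sum(p[1] for p in dst) / n
    s_dot = s_cross = 0.0
    for (xs, ys), (xd, yd) in zip(src, dst):
        ax, ay = xs - cx_s, ys - cy_s  # centered source point
        bx, by = xd - cx_d, yd - cy_d  # centered target point
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    tx = cx_d - (c * cx_s - s * cy_s)
    ty = cy_d - (s * cx_s + c * cy_s)
    return theta, tx, ty


def apply_transform(points, theta, tx, ty):
    """Rotate points by theta and translate them by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def icp(src, dst, iterations=20):
    """Align src to dst by alternating nearest-neighbor matching and rigid fitting."""
    current = list(src)
    for _ in range(iterations):
        matched = [
            min(dst, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
            for p in current
        ]
        theta, tx, ty = best_rigid_transform(current, matched)
        current = apply_transform(current, theta, tx, ty)
    return current


if __name__ == "__main__":
    scan = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 1.0)]
    moved = apply_transform(scan, 0.05, 0.1, -0.05)
    print(icp(scan, moved))
```

ICP only converges to the right answer when the initial misalignment is small, which is one reason a feature-based pose estimate such as Perspective-n-Point is commonly used to initialize it.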

Storage Robot

[tech report] [demo video]

Shu-Chun Lin, Guan-Lin Chao, Wei-Yu Chen, Li-Chen Fu

Course: Robotics (2013 Fall)

Description:

We built a storage robot that interacts with humans to deposit and withdraw their belongings. A Pioneer 3DX mobile robot, integrated with a UAL5D robot arm and a Microsoft Kinect (with embedded microphone), can move and navigate, grasp objects and position them using inverse kinematics, and interact with humans through audio (speech recognition and text-to-speech) and vision (human posture tracking and object detection). The implementation is based on OpenNI2 and OpenCV for vision, and on Google Translate and the AT&T Text-to-Speech Demo for speech.
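
The inverse kinematics idea can be sketched with a two-link planar arm, which has a closed-form solution. The real arm has more joints, so this is a deliberately simplified example; the link lengths and the function names `two_link_ik` and `forward_kinematics` are assumptions for illustration.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Return (shoulder, elbow) angles in radians that place the tip at (x, y).

    Returns the solution with a non-negative elbow angle; the mirrored
    solution (negative elbow angle) reaches the same point.
    """
    d2 = x * x + y * y
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is out of reach")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward_kinematics(shoulder, elbow, l1, l2):
    """Tip position for the given joint angles, used here to check the IK result."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


if __name__ == "__main__":
    angles = two_link_ik(1.2, 0.5, 1.0, 1.0)
    print(angles, forward_kinematics(*angles, 1.0, 1.0))
```

Running forward kinematics on the returned angles should reproduce the requested target, which is a convenient sanity check before sending joint commands to hardware.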

Object Tracking with Mobile Robot

[tech report]

Sheng-Ting Fan, Guan-Lin Chao, Tung-Yen Wu, Li-Chen Fu

Course: Intelligent Robot and Automation Lab (2012 Fall)

Description:

We programmed a Pioneer 3DX mobile robot equipped with a Microsoft Kinect to track a user-specified moving target. We calibrated the Kinect sensor, used the OpenCV library to detect and track the target, and used the ARIA library to drive the Pioneer 3DX toward the target.
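
One simple way to turn a tracked target into motion commands is a proportional controller, sketched below. This is not the ARIA API and not necessarily the controller used in the project; the function name `follow_command`, the gains, the limits, and the units (m/s and rad/s) are all hypothetical.

```python
def clamp(value, low, high):
    """Limit value to the closed interval [low, high]."""
    return max(low, min(high, value))


def follow_command(
    target_u,
    depth_m,
    image_width=640,
    desired_depth_m=1.0,
    k_turn=1.0,
    k_forward=0.5,
    max_turn=0.8,
    max_forward=0.4,
):
    """Map the target's image column and depth to (forward, turn) velocities.

    target_u: horizontal pixel coordinate of the target center.
    depth_m:  distance to the target in meters (from the depth sensor).
    A positive turn value means turning left (counterclockwise).
    """
    half_width = image_width / 2.0
    # Normalized offset in [-1, 1]; positive means the target is right of center.
    offset = (target_u - half_width) / half_width
    turn = clamp(-k_turn * offset, -max_turn, max_turn)
    forward = clamp(k_forward * (depth_m - desired_depth_m), -max_forward, max_forward)
    return forward, turn


if __name__ == "__main__":
    print(follow_command(480, 2.0))  # target to the right and far away
```

The clamping keeps the commanded speeds bounded when the target is far away or near the image edge.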

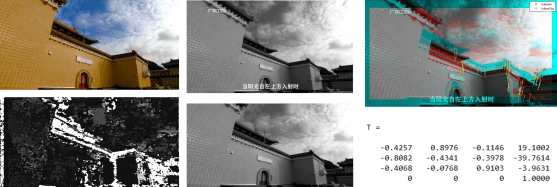

Scene Recognition and User Pose Estimation

[research paper] [demo video]

Yi-Chong Zeng, Ya-Hui Chan, Ting-Yu Lin, Meng-Jung Shih, Pei-Yu Hsieh, Guan-Lin Chao

In International Conference on Human Interface and the Management of Information 2015

Internship: Institute for Information Industry, Taiwan (2014 Summer)

Host: Yi-Chong Zeng

Description:

In this project, I co-developed the mobile app Smart Tourism Taiwan (rebranded as VZ TAIWAN). I implemented a GPS receiver and real-time image recognition to identify tourist scenes. I also developed photo depth and camera pose estimation algorithms and combined them with GPS information to estimate user poses. With this project, I won First Place at the final presentation among 22 graduate and undergraduate summer interns. Our paper was published at the International Conference on Human Interface and the Management of Information 2015.

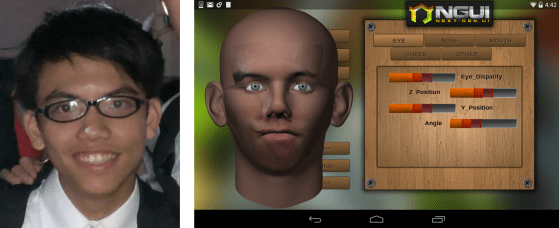

3D Facial Model Construction

[slides] [demo video]

Cheng-Chieh Yang, Guan-Lin Chao, Yen-Chen Wu, Tsungnan Lin

Course: Network and Multimedia Lab (2013 Fall)

Description:

We developed SUPER FACE, a cross-platform mobile application for 3D face modeling. Using Unity3D, I implemented image warping based on corresponding keypoints to transform users' photos, and applied the transformed photo patches as textures to 3D face models created in Maya.

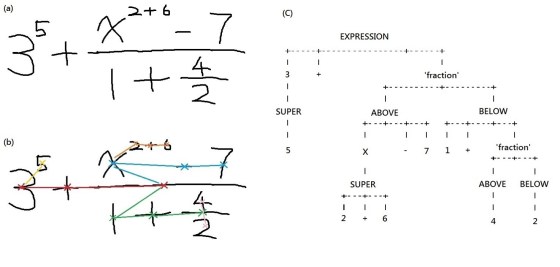

Handwritten Formula Recognition

[tech report] (in Chinese)

Shih-Hsuan Lin, Guan-Lin Chao, Wei-Yu Chen, Chieh-Chih (Bob) Wang

Course: Probabilistic Machine Perception (2014 Spring)

Description:

We implemented a handwritten formula recognizer. Individual symbols are recognized by extracting HOG features, reducing the data dimensionality with Principal Component Analysis, and training a symbol classification model with libSVM. Formula structure analysis is done with a baseline approach. The recognizer can handle complex arithmetic equations, fractions, and powers.
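
The core of a HOG feature is a histogram of gradient orientations weighted by gradient magnitude. The sketch below computes that histogram for a single grayscale cell in plain Python; it omits block normalization, PCA, and SVM training, and the function name `orientation_histogram` and the 9-bin layout are assumptions rather than details from the report.

```python
import math


def orientation_histogram(cell, bins=9):
    """Unsigned gradient-orientation histogram for one grayscale cell.

    cell is a list of rows of pixel intensities. Border pixels are skipped
    because central differences need a neighbor on each side.
    """
    height, width = len(cell), len(cell[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = cell[y][x + 1] - cell[y][x - 1]
            gy = cell[y + 1][x] - cell[y - 1][x]
            magnitude = math.hypot(gx, gy)
            # Fold the direction into [0, 180) so opposite gradients share a bin.
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle // bin_width) % bins] += magnitude
    return hist


if __name__ == "__main__":
    vertical_edge = [[0, 0, 1, 1] for _ in range(4)]
    print(orientation_histogram(vertical_edge))
```

A vertical stroke puts all of its weight in the first bin, while a horizontal stroke lands in the middle bin, which is the kind of difference a classifier can use to separate symbols.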

Real-Time Rugby Posture Classification

[slides]

Cheng-Yang (Peter) Liu, Guan-Lin Chao, Chieh-Chih (Bob) Wang

Course: Advanced Mobile Robotics (2013 Spring)

Description:

We trained a posture classification model with libSVM that recognizes rugby postures in real time from Microsoft Kinect input.